Your Training Probably Won’t Prevent Nuclear War, But It Might

March 4, 2021

On September 26, 1983, the Soviet early warning system detected the launch of five intercontinental ballistic missiles (ICBMs) from the United States. Standard protocol called for immediate retaliation. Instead, the Soviet commander on duty, Stanislav Petrov, reasoned that an attack by the United States would most likely involve an overwhelming number of ICBMs, not a handful. Petrov decided that the launch detection was a computer malfunction and did not issue orders for a nuclear counterstrike, despite not having the computer access to prove his belief. He was proven correct when the American missiles did not arrive. In subsequent interviews, Petrov credited his training with giving him the critical thinking skills to assess and judge the probabilities of the situation. Today he is recognized as the man whose clear thinking in a stressful situation helped prevent a global catastrophe.

While the consequences of poor training are rarely as severe for most organizations as they could have been in this story, it’s easy to become complacent when training workers to prepare for high-stress situations. Like most organizations, the Soviet command probably had long days when not very much happened, with normal workloads, time for casual chats, and scheduled maintenance. However, when things went wrong very quickly, Petrov was able to rely on instincts honed by rigorous training to keep his wits about him and assess the situation rationally and clearly. Had Petrov relied on the high-risk biases of general “common sense” or followed an uncritical checklist approach, it’s likely that none of us would be here to read this story today.

In other situations, however, poor training that doesn’t cover high-impact/low-probability scenarios can cause serious problems. Let’s consider two recent events as examples.

Pickering Nuclear Generating Station False Alarm

On January 12, 2020, people in Ontario, Canada woke up to a blaring alarm on their mobile devices from the Alert Ready system of the Ontario Government reporting that an incident had occurred at the Pickering Nuclear Generating Station (PNGS). While the message stressed that no radiation had been released, citizens near the power plant had to wait an agonizing 108 minutes before a second alert was sent advising people that the first message had been sent in error and that all operations at PNGS were normal.

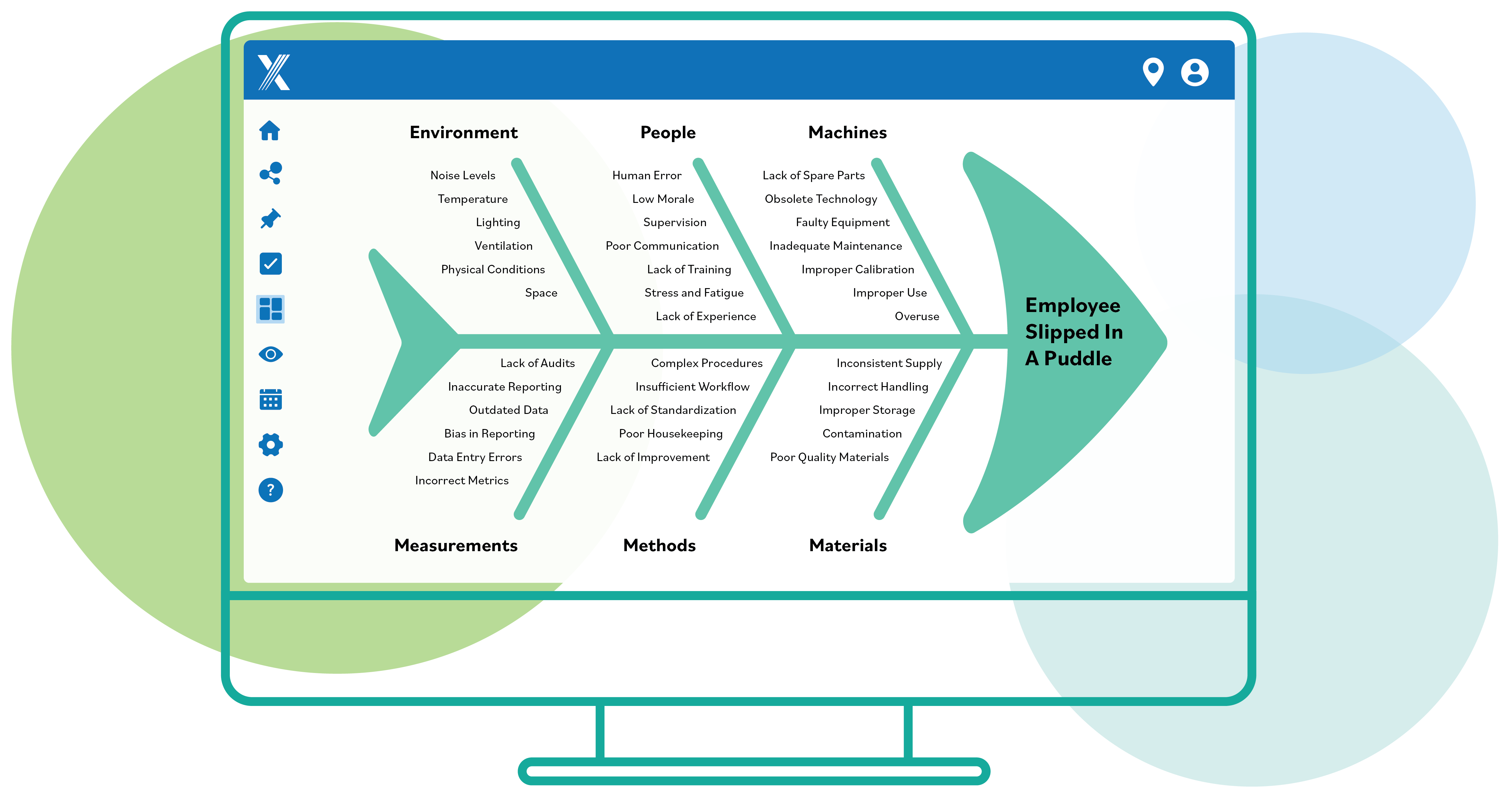

The investigation into the false alarm determined that poor training, inadequate scenario planning, and delayed communications were significant contributors. Standard procedure at an early-morning shift change called for the Duty Officer (DO) to log in to the live Alert Ready system and log out again to confirm access, then log in to the training system, send a practice alert, and log out. In this instance, however, the DO sent the practice alert from within the live system, which immediately broadcast the false alarm to everyone in the province of Ontario. The DO recognized the error immediately and requested support from supervisors at Emergency Management Ontario (EMO), but it took them almost two hours to decide whether it was appropriate to send a second alert through the same channel, and during that time the DO received no clear instructions on what to do. In fact, there was no clear, documented response for false alarms, and a great deal of tacit knowledge about the approvals needed to send messages was neither documented nor built into the Alert Ready system. The investigation provided the following insights into problems relating to policy and training, technology, and communication.

| Policy and Training | Technology | Communication |
| --- | --- | --- |
| Training for the DO in question was incomplete. It had been interrupted in November 2019 due to the activation of a provincial response to a flood. | The Alert Ready system allowed a single user to draft and send an alert. | Government officials were not immediately notified of the alert. |
| DOs were not consistently sending practice alerts on the training system, as was required by shift change procedures. | The live system and the training system could be open simultaneously. | Communications were inconsistent and done on a case-by-case basis. |
| Managers at EMO did not have access to the Alert Ready System. | The training system interface was very similar to that of the live system, which meant they could easily be mistaken for one another. | |
| The text for real alerts was identical to the text for practice alerts, with no distinctive labelling like “EXERCISE EXERCISE EXERCISE” to help users distinguish between them. | | |

Hawaii Ballistic Missile False Alarm

On January 13, 2018, people in Hawaii received a message on the Emergency Alert System (EAS) and the Wireless Emergency Alerts (WEA) system that read, “BALLISTIC MISSILE THREAT INBOUND TO HAWAII. SEEK IMMEDIATE SHELTER. THIS IS NOT A DRILL.” Officials sent a message through social media notifying the public that the alert was the result of a false alarm, but an official message through EAS and WEA was not sent until 38 minutes after the initial alert, during which time many residents of Hawaii believed they were on the verge of a nuclear attack.

In this instance, the worker who was responsible for sending the message did not hear the oral instructions from the supervisor that it was a test, hearing only the part of the text that read, “This is not a drill.” The previous shift supervisor had scheduled a test of the EAS and the WEA but had not adequately conveyed this to the next shift supervisor, who was on call during the test. As a result, while the alert was false, the worker did not send it by mistake. Having misunderstood the poorly communicated instructions, the worker sent the alert fully convinced that the emergency was real. Here, the lack of adequate scenario planning and preparation was responsible for the false alarm.

Conclusion

Taking time to train workers properly is critical for every organization. From keeping workers safe to ensuring customer satisfaction with products and services, the time spent providing, tracking, and measuring proper training is always worth the effort, even if nuclear war isn’t a likely consequence of skipping it.